- Emily's Newsletter

Blockchain + AI: The Underrated Combo That's Changing Compute Efficiency

Tech Topic #2: Federated Learning Models

This newsletter covers another tech topic: using blockchain and AI together through federated learning models. These models aren’t mainstream yet, so diving in now puts you ahead of the curve!

Federated Learning Models (FLMs) can do the following:

Minimize compute power costs for ML models

Keep data private

Unlock new business models

This is the second tech topic newsletter, so I hope you all enjoy reading it and learn something!

What are Federated Learning Models?

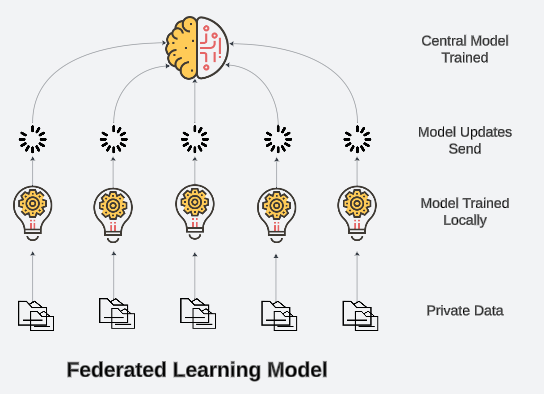

Federated Learning Models are a decentralized way of training large models: contributors keep their data private and share only model updates, not raw data. This avoids centralized data collection while still allowing the AI to improve.

Minimize Compute Costs: distributed computing cuts down on energy and hardware expenses

Enhanced Data Privacy: personal or sensitive data stays local (not shared with a central database)

New Business Models: companies can collaborate securely to solve shared challenges

How Do FLMs Work?

How it Works [2]:

A global AI model is sent to multiple devices or nodes (e.g. phones, IoT devices, servers) [2]

Each device trains the model locally using its own private data [2]

Instead of sending raw data back to a central server, the model updates (gradients) are sent back [2]

The central server aggregates the updates from all devices to improve the global model [2]

The updated model is sent back to devices, repeating the cycle [2]
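To make the cycle above concrete, here is a minimal sketch in plain Python. It is a toy simulation, not the method from [1] or code from [2]: the three "devices" are just lists in memory, the model is a tiny line fit (y = w*x + b), and the server step is a simple size-weighted average in the style of federated averaging. All function names and the synthetic data are made up for illustration.

```python
import random

def local_train(weights, data, lr=0.05, epochs=5):
    """Steps 2-3: train on one device's private data, return only the update."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y          # prediction error on a local sample
            grad_w += 2 * err * x
            grad_b += 2 * err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    # Only the change in weights leaves the device, never (x, y) pairs.
    return (w - weights[0], b - weights[1])

def aggregate(global_weights, updates, sizes):
    """Step 4: server averages the updates, weighted by local dataset size."""
    total = sum(sizes)
    dw = sum(u[0] * n for u, n in zip(updates, sizes)) / total
    db = sum(u[1] * n for u, n in zip(updates, sizes)) / total
    return (global_weights[0] + dw, global_weights[1] + db)

def make_device_data(rng, n):
    """Hypothetical private data: y = 3x + 1 plus a little noise."""
    return [(x, 3 * x + 1 + rng.gauss(0, 0.05))
            for x in (rng.uniform(-1, 1) for _ in range(n))]

def run_federated(rounds=200, seed=0):
    rng = random.Random(seed)
    devices = [make_device_data(rng, n) for n in (20, 50, 30)]
    sizes = [len(d) for d in devices]
    global_weights = (0.0, 0.0)
    for _ in range(rounds):                                   # step 5: repeat
        # Step 1: every device starts from the current global model.
        updates = [local_train(global_weights, d) for d in devices]
        global_weights = aggregate(global_weights, updates, sizes)
    return global_weights

w, b = run_federated()
print(f"learned w={w:.2f}, b={b:.2f}")
```

Notice that `aggregate` never touches a device's data, only its update. Real systems add a lot on top of this (secure aggregation, device scheduling, compression), which this sketch leaves out.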

According to [1], one federated learning approach, FL-E2WS, achieved up to a 70.12% reduction in energy consumption and improved global model accuracy by 10.21%. Compared to other FL versions, it also cuts energy use by 38.21%. These numbers are insane, especially considering that energy constraints are one of the biggest bottlenecks in AI scaling right now [1].
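If percentages like these feel abstract, a quick back-of-the-envelope calculation helps. The sketch below assumes a made-up baseline of 1000 joules per training run (that number is not from [1]; it is only there to make the arithmetic visible) and applies each reported reduction to it. The two percentages are measured against different baselines, so they are shown separately rather than combined.

```python
def energy_after_reduction(baseline, reduction_pct):
    """Energy left after cutting `reduction_pct` percent from `baseline`."""
    return baseline * (1 - reduction_pct / 100)

baseline_joules = 1000.0  # hypothetical baseline, not a figure from the paper

# The "up to 70.12%" reduction reported in [1]
best_case = energy_after_reduction(baseline_joules, 70.12)

# The 38.21% reduction relative to other FL versions, reported in [1]
vs_other_fl = energy_after_reduction(baseline_joules, 38.21)

print(f"after 70.12% cut: {best_case:.1f} J")
print(f"after 38.21% cut: {vs_other_fl:.1f} J")
```

In other words, under this assumed baseline a 70.12% reduction leaves a bit under a third of the original energy use.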

Even more surprising is the 10.21% accuracy boost—despite not using raw data directly to update the model [1]. Normally, FL is expected to trade off some accuracy, but this improvement might be due to reduced bias from decentralized training.

I highly recommend checking out this paper—and even the open-source code—because this is seriously overlooked right now. (See citations).

Real World Applications

Here are some cool applications of this privacy technology:

Privacy-Preserving Voice Assistants: FLMs can help improve voice assistants such as Alexa without compromising user data. Interactions with the assistant stay private, while the product algorithm gets smarter.

Decentralized Personal Media: Platforms such as Bluesky and Farcaster are exploring decentralized algorithms that aim to improve user experience without selling or exposing personal data.

Large Company Savings: Companies that rely on large amounts of user data could cut costs significantly by using FL as an alternative to their current energy-inefficient models.

What’s Next?

In the following newsletters, I will continue sharing bi-weekly AI & Blockchain topics and my student-in-tech journey, and I will strive to be a source of encouragement for you guys!

Coming up, you can expect another student-in-tech update—this time about ETH Denver (in ~3 weeks)!

Feel free to follow this newsletter if you want to see more!

Citations

[1] R. R. de Oliveira, K. V. Cardoso, and A. Oliveira-Jr, "Improving Energy Efficiency in Federated Learning Through the Optimization of Communication Resources Scheduling of Wireless IoT Networks," arXiv preprint arXiv:2408.01286, Aug. 2024. [Online]. Available: https://doi.org/10.48550/arXiv.2408.01286.

[2] V. Chugani, "Federated Learning: A Thorough Guide to Collaborative AI," DataCamp, Oct. 4, 2024. [Online]. Available: https://www.datacamp.com/blog/federated-learning

[3] C. Ying et al., "BIT-FL: Blockchain-Enabled Incentivized and Secure Federated Learning Framework," IEEE Transactions on Mobile Computing, vol. 24, no. 2, pp. 1212-1225, Feb. 2025. [Online]. Available: https://tsapps.nist.gov/publication/get_pdf.cfm?pub_id=956862